|

InfoSphere DataStage: Parallel Framework Standard Practices September 2010 International Technical Support Organization SG24-7830-00. Top 50 Datastage Interview Questions & Answers (2021 Update) 1) Define Data Stage? A data stage is basically a tool that is used to design, develop and execute various applications to fill multiple tables in data warehouse or data marts. Kenworth navplus manual. It is a program for Windows servers that extracts data from databases and change them into data warehouses.

General Stage

Annotation: are like comments for a DataStage jobs. They are used in jobs to provide information about the job

Container: As the name suggests are used to contain or hold a group of stage. In cases where the transformation logic involves a lot of stages, container can be used to hold all the transformation logic inside them. This will make the job look a little less complex for new developers.

Here the transformation logic is inside the container stage. It will be opened when you double click on the container stage.Note that it has “Input” and “Output”; this means that the Container stage can handle only a single input and single output. To make the above container Shared, right click on the container and select the option “Convert to Shared”. By doing this, we are making the transformation logic inside the container available for use by any job in the project. Description Annotation: is used to enter the description about the job. Here is an example

Link: is used as a connector between two stage. It shows the direction of flow of data in a DataStage job.

Database Stages

These stages are used to connect to various databases. Most of the stages that you see below allow native connectivity to the respective databases.

In cases when native connectivity is not available we use the ODBC stage or Dynamic RDBMS stage

ODBC Stage: Extracts data from or loads data into databases that support the industry standard Open Database Connectivity API

Dynamic RDBMS Stage: is a database stage that performs read write operations on the following list of databases

Informix, UDB/DB2, Microsoft SQL/Server, Oracle and Sybase. Note: We can also use ODBC connection string to establish a connection to a database.

Stored Procedure: stage is dedicated to run stored procedure as the other database stages do not allow this.

Stages used most frequently will be explained in the next lessons.

Development/Debug StagesAs the name suggests, these stages are used for testing and debugging. Column Generator: Is used to generate columns

Head: is used to get the n records starting from the first record. Additionally by specifying other options we can also get the n’th record starting from the 1st record.

Peek: is an output stage used in debugging. In instances where we have transformed the data and would like to check if the transformation is working properly before loading data to the target, we use a peek stage. The records that are sent to Peek stage can be either viewed in the job log in Director or can be written to a file.

Row Generator: as the name suggests is used to generate rows of data

Sample: Will take a random subset of input data that it receives and distributes it among output links

Tail: is used to get the n records starting from the last record.

Write Range Map: is used to create a Range Map that can be used while performing range partition.

File StagesThese are used to read and write data to various file systems Complex Flat File:This is used to read a file or write to a file, but cannot be used to do both at the same time. It can have multiple output links and a single reject link. It can be used to read data from one or more complex flat files like MVS data sets with QSAM and VSAM files. We can also read data from files that contain multiple record types.

Data Set stage is used to create Data Sets. These are operating system files and are referred by a control file which has the extension .ds. Data Sets are only readable from within DataStage and are commonly used to improve performance. It allows only a single input or a single output and unlike sequential files they can be executed in parallel

External Source stage allows us to interact with or call external programs. This stage is mostly used to interface with source that are not currently supported by DataStage. It can have a single output link and single reject link

External Target stage like external source stage is used to write data to source that are currently not supported by DataStage. It can have a single input link and a reject link.

File Set stage is used to create files and save them with the extension .fs. It is useful because most flat files have a 2GB limit and using flat file stage we can store data that is more than the limit. Unlike the sequential file stage, this stage runs only in parallel mode. The difference between Data Set and File Set is that, File Set stores the formatting information that describe the format of the files to be read or written.

Lookup File Set stage is used to create file sets that are going to used with Lookup Stage. The extension for files created by this stage is also .fs. Using a Lookup File Set to perform a lookup will result in a significant increase in performance when compared to other stages. The stage can have a single input link and an output link that must always be a reference link

Example:

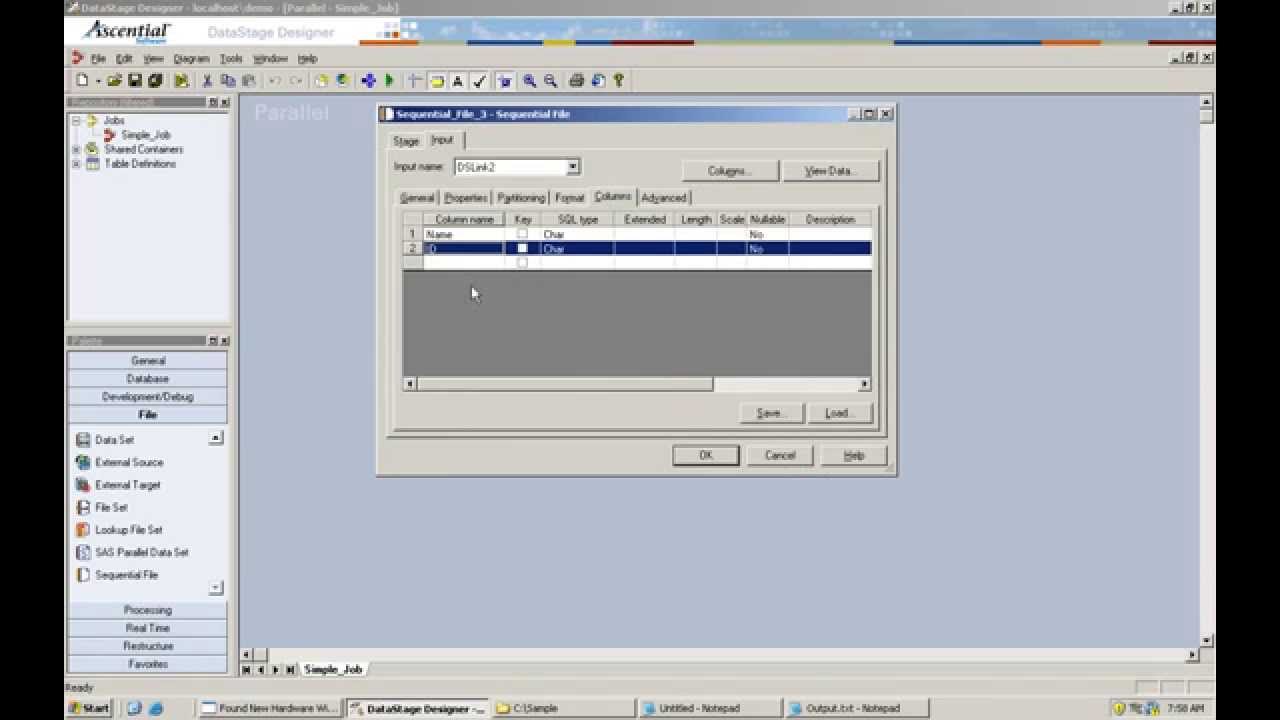

Sequential File is used read and write data to a single or multiple flat files. It is one the most commonly used stages in DataStage. It can have a single input link or a single output link and a reject link. This stage executes in parallel mode when extracting data from multiple files but will run sequentially when extracting from a single file.

Complex Flat File Stage Datastage Example Programs PdfProcessing Stages

These are the stages that perform all the transformations in DataStage. Let us briefly see what each one of the does.

Please note the most frequently used stages will be explained in detail with examples in the next lessons

Aggregator: stage as the name suggests is used to perform aggregations and grouping functions. Here are some functions that can be performed using Aggregator stage – Sum, Average, Max value, Min value, Group by and more.

Change Capture stage is used to identify the differences between two streams of data. One link is assigned as the before link and the other link is after link. Based on the comparison done using the defined key fields, the stage generates the following codes 0,1,2,3,4

0 -> (duplicate) denotes the record is a copy

1 -> (new record) denotes its a new record and has to be insert 2 -> (delete) denotes the record can be deleted delete 3 -> (edit/modify) denotes the record exists but the value field in after link is different from the one in before link.

Change Apply stage reads a record from the change data set and from the before data set, compares their key column values, and acts accordingly (Insert, Delete, Edit, Copy).

Compare and Difference stages as the names suggest are used to compare the difference between two records based on key values

Copy stage can create multiple output links for a single input link.

Ex: If our input data has only one record Name Age Sex ABC 20 F We can use this stage to create any number of duplicates for this record

Decode and Encode stages are used to encode the data based on a key value and they decode it based on the same value.

Expand stage is used to extract zipped content/files.

Filter stage is used to segregate records to different links based on filer conditions

Funnel stage is used to collect records from multiple input links and funnel them to a single output link.

Join, Lookup and Merge are very useful stages and are used to perform all kinds of joins on data. They differ in memory utilization and number of output & reject links that they allow.

will be explained in detail with examples

Modify stage is used to modify the meta data of the columns.

Pivot stage is used to convert rows to columns and columns to rows

Remove Duplicates stage as the name suggests is used to remove duplicate records from the input data.

Slowly Changing Dimension stage is used to implement SCD type 1 & 2 in datastage jobs

Sort stage is used to sort input data and send it to the output. It can also remove duplicates.

Switch stage takes a single input and assigns each input row to an output link based on the value of a selector field. The Switch stage is similar to Switch statement in C or SQL.

Transformer stage is probably the most used stage in DataStage. It has built-in transformation functions and additional ones can also be created by user (Routines)

Example case

Logic pro x for mac 10.6.8. The input file in our scenario is a stream of records representing invoices. It is a text file in a header-trailer format and has the following structure: - A header starts with a letter H which is followed by an invoice number, a customer number, a date and invoice currency - Every item starts with a letter I which is followed by product ID, product age, quantity and net value - And the trailer starts with a T and contains two values which fullfill the role of a checksum: the total number of invoice lines and total net value. Effect filmora gratis.  Solution

Datastage job design which solves the problem of loading an extract structured into headers and items: Detailed illustration of each component of the jobtrsfGetKinds transformer - a type of record is extracted from each input line and assigned and written into a kind column

The transformer reads first character of each line to mark records and divide them into kinds:

Complex Flat File Stage Datastage Example Programs ListtrsfAssignHeaders transformer assigns invoice headers to each line of the input flow. Invoice header is a 8 character key which is extracted from the dataline using the following formula: L02.data_line[1,8]

A datastage transformer which assigns headers using a stage variable: trsfReformat transformer splits the data flow into invoice headers and invoice lines. A InvoiceLineID stage variable is used to populate a sequence number for each invoice line. In order to correctly load net value, the ereplace function replaces commas with dots in this numeric field.

The following transformer splits the flow into headers and items and reformats records into a corresponding structure:

Target oracle tables with correctly loaded invoice headers and lines:

Comments are closed.

|

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed